Edge AI Assistant: Powering Private, Real-Time API Experiences

Organisations involved

Main Participant: ArtificaX develops next-generation AI assistants that can plan, act and complete tasks. The company combines research in intelligent agent behaviour with scalable engineering to build automation systems for industry and enterprise. High-performance computing (HPC) supports their work in model training and synthetic data generation.

Turkcell Global Bilgi is Türkiye’s leading digital customer-experience provider, offering contact centre and digital-support services across the telecommunications, finance, retail and public sectors.

The challenge

While large language models have changed how people search and ask questions, most are still limited to dialogue and do not execute actions. The next step in AI is building assistants that can act on a user’s behalf, making bookings, checking bills, updating records, and handling everyday tasks through digital services. This shift is creating a new market where people expect assistants that are useful, safe, and trustworthy, not just conversational.

ArtificaX set out to lead this emerging field by creating an assistant that can understand instructions, decide what action is needed, and call external services to complete the task. To do this reliably, the models must learn from large numbers of realistic, multi-step dialogues containing many types of actions. Generating, testing, and validating this data, however, requires significant computing power. As a startup, ArtificaX could not access or afford the scale needed to train and distil models capable of running accurately on devices.

The team required HPC resources to create synthetic, action-rich dialogues, train models that understand tool use, and distil them into smaller models suitable for phones, tablets, and embedded systems. By utilising HPC, the company reduced the financial and development risks, making it possible to bring an on-device assistant to market faster.

The solution

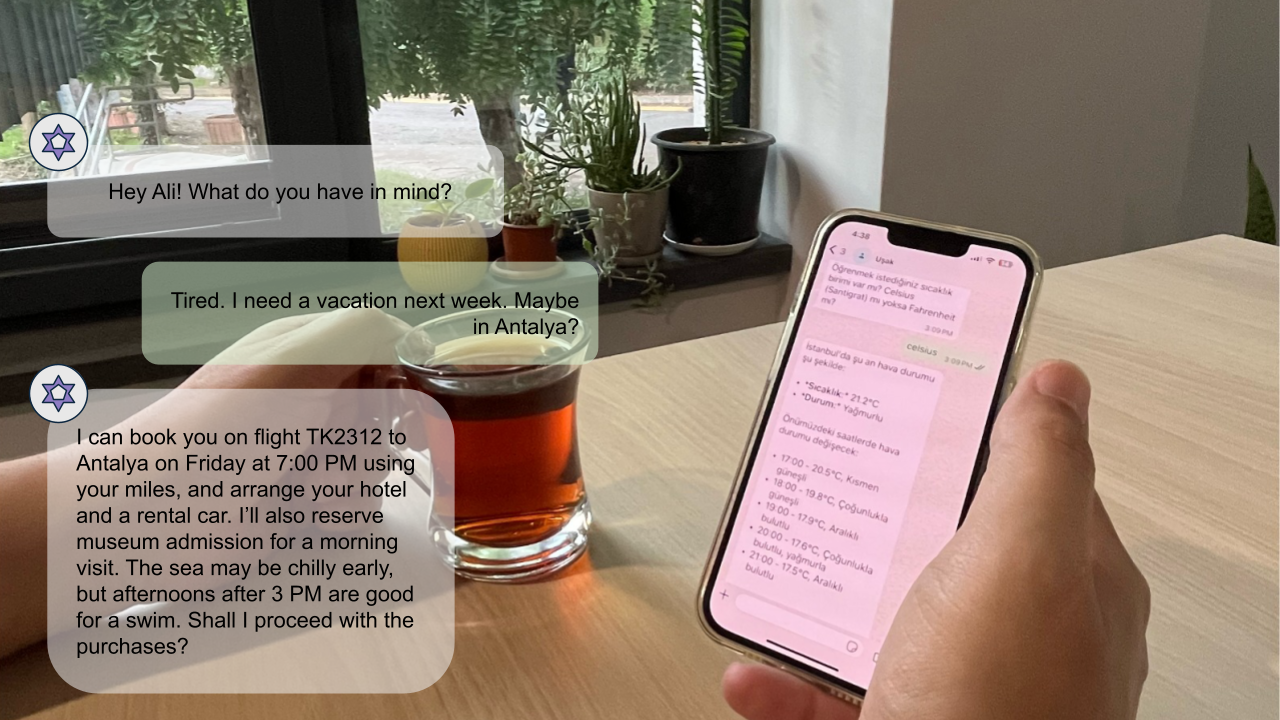

ArtificaX built an assistant that acts on the instructions given to it. Using the HPC infrastructure MareNostrum5, they generated large sets of realistic synthetic dialogues to teach the model how to use external services for tasks such as booking, buying and updating information. Each action was validated in a safe test environment to ensure the assistant would behave correctly. The team then distilled the high-capacity model into efficient 8-billion- and 4-billion-parameter versions that run on phones, tablets and vehicles. Most processing happens on the device for privacy reasons, with cloud access used only when necessary. The result is a customisable assistant that executes real-world tasks with reliable confirmations and strong personal data safety controls.
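To make the validation step more concrete, here is a minimal sketch of how a synthetic dialogue's actions might be checked in a sandbox before the dialogue is kept as training data. It is an illustration only, not ArtificaX's pipeline: the tool names (`check_bill`, `book_appointment`), the `ToolSpec` structure, the mock services and the call format are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical description of one external service the assistant may call."""
    name: str
    required_args: dict[str, type]
    mock: Callable[..., dict[str, Any]]  # sandbox stand-in, never a live service


def mock_check_bill(account_id: str) -> dict[str, Any]:
    # Fixed, made-up response used only inside the sandbox.
    return {"account_id": account_id, "amount_due": 42.5}


def mock_book_appointment(date: str, service: str) -> dict[str, Any]:
    return {"status": "booked", "date": date, "service": service}


# Assumed registry of tools; the real set of services is not given in the text.
REGISTRY: dict[str, ToolSpec] = {
    "check_bill": ToolSpec("check_bill", {"account_id": str}, mock_check_bill),
    "book_appointment": ToolSpec(
        "book_appointment", {"date": str, "service": str}, mock_book_appointment
    ),
}


def validate_call(call: dict[str, Any]) -> tuple[bool, str]:
    """Check one generated tool call against its schema, then run it on the mock."""
    spec = REGISTRY.get(call.get("tool"))
    if spec is None:
        return False, "unknown tool"
    args = call.get("args", {})
    if set(args) != set(spec.required_args):
        return False, "argument names do not match the schema"
    for key, expected in spec.required_args.items():
        if not isinstance(args[key], expected):
            return False, f"argument {key!r} has the wrong type"
    spec.mock(**args)  # executed against the mock only
    return True, "ok"


def keep_dialogue(calls: list[dict[str, Any]]) -> bool:
    """Keep a synthetic dialogue only if every action in it validates."""
    return all(validate_call(call)[0] for call in calls)
```

Under these assumptions, a dialogue whose actions all match a known tool and its argument schema is kept, while one that invents a tool or passes a wrongly typed argument is discarded before training.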

Impact

The project proved that compact on-device models can complete multi-step tasks reliably, giving ArtificaX a core component for commercial agentic applications. This reduced technical risk, strengthened the product roadmap and accelerated market entry with a privacy-controlled assistant. Customers can automate routine work in customer support, finance, sales and operations while reducing cloud costs and easing compliance by keeping sensitive data on device. This improves competitiveness, increases customer loyalty and attracts new partners.

For users, the assistant keeps data local by default and asks for explicit consent before acting. Everyday tasks such as checking bills, managing appointments or making purchases become easier and less time-consuming. Local processing also helps users in areas with weak connectivity, and voice access supports digital inclusion.
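The consent-first, local-by-default behaviour described above could be sketched roughly as follows. This is a hedged illustration rather than the product's actual logic: the `Action` type, the `needs_cloud` flag and the `ask_consent` and `execute` callbacks are hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    """Hypothetical action the assistant proposes to take for the user."""
    description: str
    needs_cloud: bool = False  # assumed flag; local execution is the default


def run_action(
    action: Action,
    ask_consent: Callable[[str], bool],
    execute: Callable[[Action, str], str],
) -> str:
    """Ask for explicit consent, then run on the device unless cloud is required."""
    if not ask_consent(f"Allow: {action.description}?"):
        return "cancelled: user declined"
    target = "cloud" if action.needs_cloud else "device"
    result = execute(action, target)
    # Return a clear confirmation of what was done and where.
    return f"done on {target}: {result}"
```

Nothing runs until the consent callback returns `True`, and the device is chosen unless the action is explicitly marked as needing the cloud.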

Finally, processing intelligence on device reduces data-centre usage, lowering energy consumption at scale and therefore the environmental footprint.

Benefits

- Efficient 8B- and 4B-parameter models that deliver high task-completion accuracy.

- On-device processing reduces cloud compute reliance.

- Fast response times on leading devices, enabling smooth real-time interactions.

- Reliable task execution with clear confirmations, cutting task time by up to 57%.

- A repeatable build and evaluation pipeline enables new services to be added in days.