Enhancing Explainable AI for Investment Management with Large Language Models

Organisations involved

Main Participant: Axyon AI is an Italian fintech SME providing AI-powered predictive insights for quantitative investment strategies.

Technology Expert: UNIMORE–AImageLab at the University of Modena and Reggio Emilia specialises in deep learning and multimodal AI, contributing expertise in model training and evaluation.

The challenge

The investment management sector increasingly depends on quantitative AI models to support forecasting, portfolio construction and risk analysis. However, as these models have become more sophisticated, transparency has not kept pace. Firms often struggle to explain why a model behaves as it does, particularly when relying on complex explanation techniques such as SHapley Additive exPlanations (SHAP) values, which attribute a model's prediction to individual features relative to an average baseline, or other feature attribution methods applied to multivariate forecasting models. While these tools offer valuable insight for specialists, they remain inaccessible to many institutional clients, regulators and internal stakeholders, who need clarity, auditability and defensible reasoning before they can trust AI-driven recommendations.
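SHAP builds on Shapley values from cooperative game theory. Each feature receives its weighted average marginal contribution to the prediction across all coalitions of the other features, and the attributions sum to the gap between the prediction and the baseline. The brute-force sketch below illustrates that definition on a hypothetical toy model. It is a simplification, not how SHAP libraries compute attributions: "absent" features are replaced by a single baseline vector, whereas SHAP implementations typically average over a background dataset. Exact enumeration is also exponential in the number of features, which is why practical tools rely on approximations or model-specific algorithms.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(f: Callable[[Sequence[float]], float],
                   x: Sequence[float],
                   baseline: Sequence[float]) -> list[float]:
    """Exact Shapley attributions of f(x) relative to f(baseline).

    Features outside a coalition take their baseline value (a single-reference
    simplification chosen for illustration).
    """
    n = len(x)

    def value(coalition: frozenset) -> float:
        # Features in the coalition come from x, all others from the baseline.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return f(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical toy "forecast": a linear term plus an interaction between two signals.
model = lambda z: 2 * z[0] + z[1] * z[2]
phi = shapley_values(model, x=[1.0, 2.0, 3.0], baseline=[0.0, 0.0, 0.0])
print([round(p, 6) for p in phi])  # [2.0, 3.0, 3.0]
```

The linear term is credited entirely to the first feature, while the interaction is split evenly between the two signals that create it; the three attributions add up to f(x) - f(baseline) = 8.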

Axyon AI already generates rich predictive outputs and interpretable features, but translating this technical information into clear, consistent, readable narratives remained a major bottleneck. Analysts spent significant time preparing commentaries and justifications for investment decisions, and compliance teams needed explanations that could be reviewed, documented and communicated efficiently. The challenge was therefore not only scientific (improving explainability) but also commercial: greater transparency was essential to unlock regulated market segments, accelerate adoption and strengthen the credibility of AI-powered investment processes.

The solution

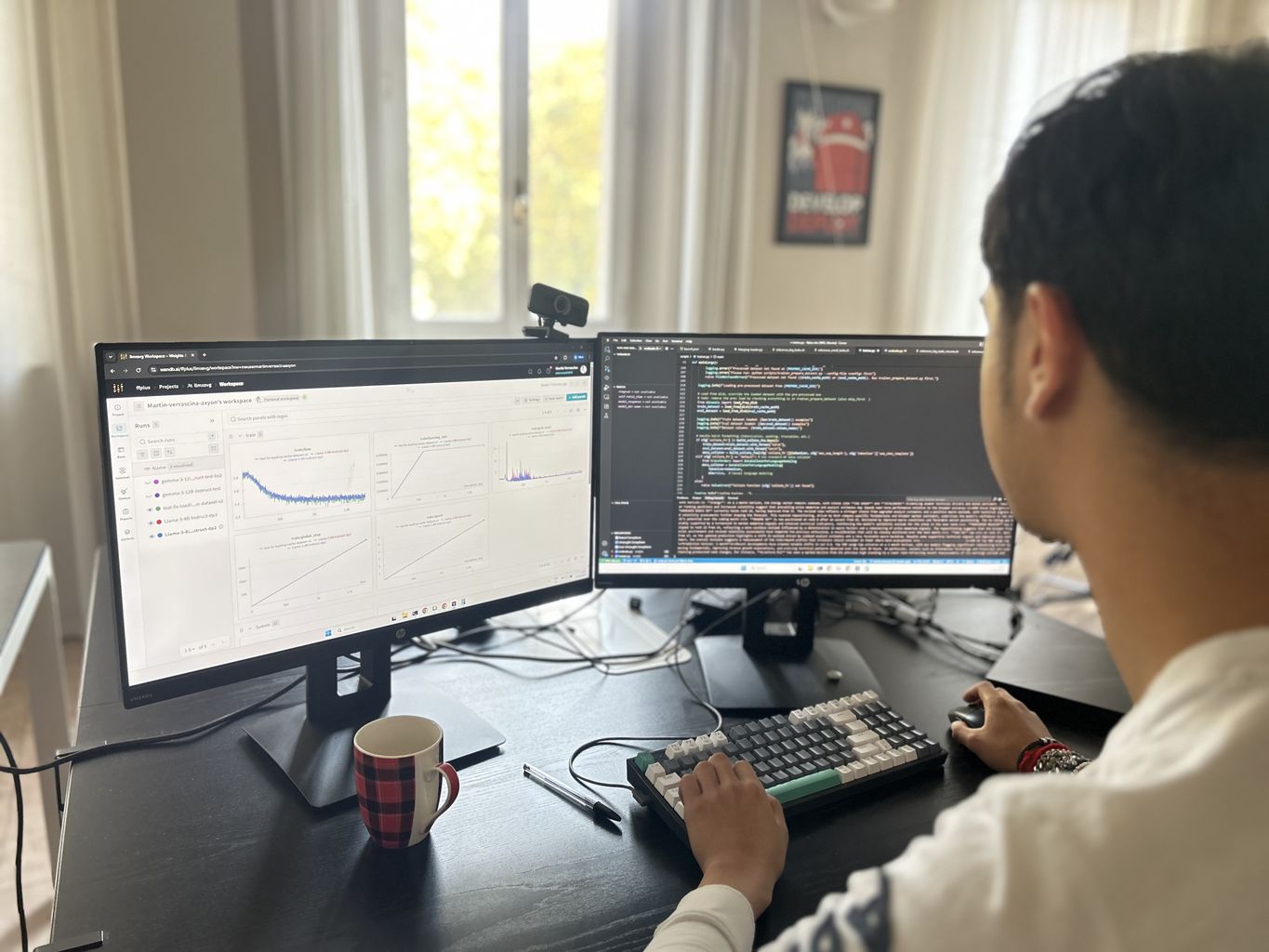

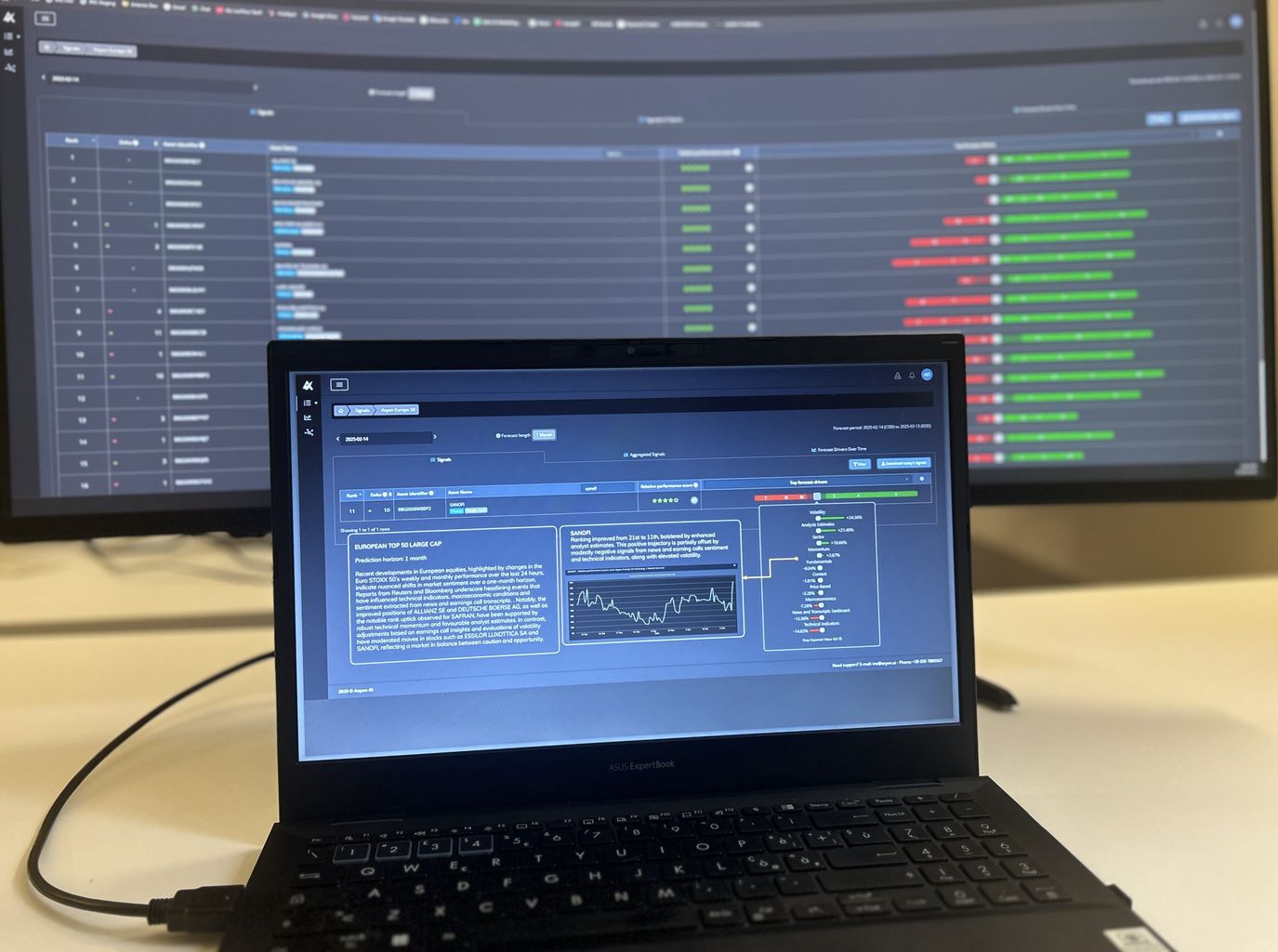

Using HPC resources, the partners developed a specialised financial-domain LLM that converts structured inputs (quantitative model outputs, forecast signals, SHAP attributions, asset metadata and market context) into clear, human-readable explanations. The team benchmarked fifteen open and commercial models through a unified evaluation pipeline, using LLM-as-a-Judge scoring to identify the strongest candidates. Selected mid-sized open-weight models were then fine-tuned with parameter-efficient techniques such as LoRA (Low-Rank Adaptation), combined with mixed-precision and memory-optimised training. This HPC-accelerated workflow enabled rapid iteration, delivered significant quality gains and allowed an explainability prototype to be integrated directly into Axyon IRIS.
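The conversion step can be pictured as serialising each asset's structured output into a prompt for the explanation model. The sketch below is a minimal illustration under stated assumptions: the `AssetSignal` fields, the `build_prompt` helper, the instruction wording and the choice to pass only the top-k SHAP attributions are all hypothetical and do not describe Axyon AI's actual input format.

```python
from dataclasses import dataclass, field


@dataclass
class AssetSignal:
    """Hypothetical container for one asset's structured model output."""
    ticker: str
    sector: str
    forecast_score: float            # model signal (hypothetical scale)
    horizon_days: int                # forecast horizon
    shap: dict[str, float] = field(default_factory=dict)  # feature -> attribution
    market_context: str = ""


def build_prompt(sig: AssetSignal, top_k: int = 3) -> str:
    """Serialise one asset's structured outputs into a plain-text LLM prompt."""
    # Keep only the k features with the largest absolute attribution.
    top = sorted(sig.shap.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_k]
    drivers = "\n".join(f"- {name}: {value:+.3f}" for name, value in top)
    return (
        "You are an investment analyst. Explain the model's view in plain English "
        "for a non-specialist client, citing only the drivers listed.\n\n"
        f"Asset: {sig.ticker} (sector: {sig.sector})\n"
        f"Forecast score: {sig.forecast_score:.2f} over {sig.horizon_days} days\n"
        f"Main drivers (SHAP attributions):\n{drivers}\n"
        f"Market context: {sig.market_context or 'n/a'}\n"
    )


# Hypothetical example asset; all values are illustrative.
signal = AssetSignal(
    ticker="ABC", sector="Utilities", forecast_score=0.72, horizon_days=20,
    shap={"momentum_3m": 0.21, "volatility": -0.35, "pe_ratio": 0.05,
          "earnings_revision": 0.12},
    market_context="Rates stable after the latest central-bank meeting",
)
print(build_prompt(signal))
```

Ranking by absolute attribution keeps the strongest negative drivers as well as the positive ones, and restricting the prompt to listed drivers makes the generated narrative easier to audit against the underlying numbers.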

Impact

Clearer AI explanations strengthen trust in automated investment processes and improve transparency for end-investors. In addition, parameter-efficient training and the use of shared HPC resources reduce compute demand compared with full model retraining, lowering energy use across experimentation cycles.
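LoRA illustrates why parameter-efficient training needs less compute than retraining a full model: the pretrained weight matrix W stays frozen, and only two small matrices A and B are trained, so the effective weight becomes W + (alpha / r) B A. The NumPy sketch below shows a single adapted linear layer. The rank `r = 8`, scaling `alpha = 16`, initialisation scale and layer size are illustrative assumptions, not the configuration used in the project.

```python
import numpy as np


class LoRALinear:
    """Frozen linear layer W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, weight: np.ndarray, r: int = 8, alpha: float = 16.0, seed: int = 0):
        d_out, d_in = weight.shape
        rng = np.random.default_rng(seed)
        self.weight = weight                            # frozen pretrained weights
        self.A = rng.normal(0.0, 0.01, size=(r, d_in))  # trainable, small random init
        self.B = np.zeros((d_out, r))                   # trainable, zero init
        self.scale = alpha / r

    def __call__(self, x: np.ndarray) -> np.ndarray:
        # Base projection plus low-rank correction; equals x @ W.T while B is zero.
        return x @ self.weight.T + self.scale * (x @ self.A.T) @ self.B.T

    def trainable_params(self) -> int:
        # Only A and B receive gradients (and optimiser state).
        return self.A.size + self.B.size

    def merged_weight(self) -> np.ndarray:
        # Fold the update into W so inference needs no extra matrix products.
        return self.weight + self.scale * self.B @ self.A


layer = LoRALinear(np.random.default_rng(1).normal(size=(1024, 1024)), r=8)
print(layer.trainable_params(), layer.weight.size)  # 16384 1048576
```

For this hypothetical 1024 x 1024 layer, only r * (d_in + d_out) = 16,384 of the 1,048,576 weights (about 1.6%) are trained. Initialising B to zero means the adapted model starts out identical to the base model, so fine-tuning begins from the pretrained behaviour.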

Benefits

- Up to 55% improvement in explanation quality over the base models, with the fine-tuned models integrated into an explainability prototype in IRIS.

- Market expansion from ~10% to ~25% of addressable firms and a potential increase of up to 15% in Average Revenue Per User (ARPU).

- Efficiency gains: ~1 FTE-month saved per client per year (~€6k), with total savings rising from €90k to €420k/year as adoption scales.

- 48,000 EuroHPC GPU-hours enabled multi-node parameter-efficient fine-tuning (PEFT) and rigorous model benchmarking.

- Stronger ecosystem: deepened collaboration with UNIMORE–AImageLab and NCC Italy, paving the way for future co-development and knowledge transfer across modelling, evaluation and HPC workflows.
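The efficiency figures are consistent with simple linear scaling: dividing each yearly total by the ~€6k saved per client gives the number of clients that total implies. The sketch below assumes savings scale linearly with client count, which the figures suggest but do not state explicitly; the helper name `implied_clients` is hypothetical.

```python
# ~1 FTE-month saved per client per year, as stated in the benefits list.
PER_CLIENT_SAVING_EUR = 6_000


def implied_clients(total_saving_eur: float,
                    per_client: float = PER_CLIENT_SAVING_EUR) -> float:
    """Clients implied by a yearly savings total, assuming linear scaling."""
    return total_saving_eur / per_client


for total in (90_000, 420_000):
    print(f"€{total:,}/year ≈ {implied_clients(total):.0f} clients")
# €90,000/year ≈ 15 clients
# €420,000/year ≈ 70 clients
```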